How AI can Revolutionise the Game Industry

When Lee Sedol, the world champion Go player, defeated the AI AlphaGo in their fourth match, the people of South Korea rejoiced.

Go, an ancient strategy board game, is integral to South Korean culture and Sedol is one of the greatest players in the country’s history, but he had already lost the five-game series against AlphaGo, having resigned from the first three matches. The South Koreans didn’t care – he was the human champion who had scored a win against an AI that had seemed omnipotent at the ‘most complex game devised by man.’

Sedol lost the fifth game too and three years later in 2019, he retired from the professional circuit, stating that even if he was number one, there was an ‘entity that could not be defeated.’ He now trains other AI Go programs.

AI in the Game Industry: An Overview

Today’s deep-learning neural networks, which are loosely modelled on how humans learn, can be trained on vast data sets to achieve superhuman proficiency at specific tasks – AlphaGo learned to play Go, and then mastered it. Generative AIs use such neural networks to create new content in response to a textual or visual prompt, or even certain contextual cues.

In this blog, we will explore various types of AI tool sets that are applicable to game development. Each game contains thousands of models, textures and other assets, and AI can be harnessed to generate these at scale, and at a fraction of the cost and time that is currently spent developing them. We will also discuss companies that are working on, or offering, AI solutions for key parts of the game asset pipeline.

We will also delve into the use of AI in game testing and playtesting for bugs – AI can potentially automate quality assurance. Games have grown bigger and bigger, and quality assurance has become increasingly challenging. AI can help spare developers the thankless, time-consuming task of playtesting and bug-fixing.

AI is thus both a literal and figurative game changer for developers, and in the following sections we deal with the main contexts in which AI is being used to help streamline how games are made – from the creation of game assets to the testing of games in the development phase.

The Generative Revolution in Game Development

According to venture firm Andreessen Horowitz, even small game studios can now finally achieve quality without punitive costs and time, because they can harness generative AI tools to create game content with unprecedented ease.

Generative AI thus holds great promise for gaming because the AAA game industry has a steep barrier to entry – consider the budget, the man-hours, and the crunch behind games like Red Dead Redemption 2 (RDR 2, 2018) and other large-scale games. In fact, RDR 2’s estimated budget of $540 mn comfortably exceeds the most expensive Hollywood film – Pirates of the Caribbean: On Stranger Tides ($379 mn).

To compete with the likes of Rockstar, developers need to find cost-effective tools for the game development pipeline, and even giants like Rockstar or Ubisoft can benefit from such solutions – in fact, Ubisoft is working on both an AI-powered animation system, and an AI bug-fixing tool. Quite a few studios are hence already trying to enhance their workflows with AI, as we will discuss below.

2D Assets and Concept Art

AI-powered programs such as Midjourney, Stable Diffusion and DALL-E 2 can generate high-quality image assets, such as concept art and 2D game content, from text prompts, and they have already found a place in game asset production – one developer has used these AI generators in tandem, alongside a professional artist, to create concept art within days rather than weeks.

These aren’t enterprise tools – they are available to enthusiasts as well, and YouTube has videos on how to generate concept art or any type of 2D image using such AI generators for free.

Ludo, in turn, is a company which offers an image generation solution geared for studios. Ludo is an AI-powered game ideation and creation platform that is intended to streamline the creative process of game development, and one of the ways it helps game developers is by using Stable Diffusion to generate images during the ideation phase, and even create high-quality 2D artwork and assets further down the pipeline.

In fact, the content created by Ludo’s image generator can be fine-tuned to the studio’s needs. Developers can use keywords to generate game images, icons and even more detailed assets like character concepts, in-game items and more. The image generator can also transform one image to another, essentially creating variations of the input, or rendering it with different art styles. Developers can also condition Ludo to use specific colours, styles and themes to get results that are consistent with their art design.

As is perhaps evident, AI-powered 2D art generation is quite mature already, and can be deployed not just by studios but even by hobbyists who want to use these tools to generate images, or even use such images as references for their original artwork.

3D Artwork and Models

AI-generated 3D artwork is yet to be wholly integrated into the asset creation pipeline, but Nvidia is to some extent leading the charge on this aspect of game development with its Omniverse. In fact, the very purpose of the Omniverse, per Nvidia, is to help individuals and teams develop seamless, AI-enhanced 3D workflows.

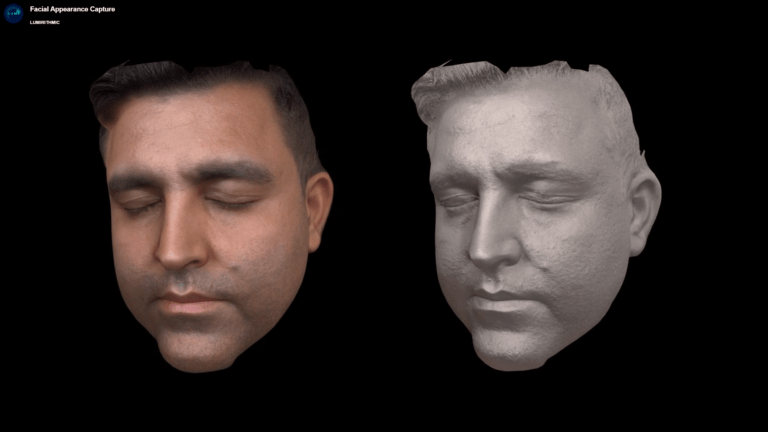

Developers using the Omniverse can deploy Lumirithmic to generate high-fidelity, movie-grade 3D head models from facial scans. Lumirithmic is a scalable solution that can be used in just about any digital content creation pipeline.

Elevate3D uses 360-degree videos of products to make highly accurate 3D models, which can then be used in product presentations, demos and even animations. In one demonstration video, the user captures a 360-degree video of a hair-care product on a turntable using their cellphone, and feeds it to Elevate3D – and that’s all it takes to create a full 3D model that can be modified and rendered inside a 3D application. Elevate3D could be perfect for the creation of in-game props and items, especially for realistic games set in the present. A game like Grand Theft Auto V (2013) has countless items and props, and Elevate3D could allow the studio to devote more time to hero assets, which the player will focus on and interact with, rather than working on mundane props that flesh out the game world.

Perhaps the most tantalising Omniverse solution is Get3D, an AI tool that can generate detailed models with textures using just 2D images, text prompts and random number seeds. The tool can also generate variations for its models, apply multiple textures to a model on the fly, and can even interpolate between two generated models – for example, morph a fox into a dog and then into an elephant and so on.
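Morphing between two generated models is usually done by blending the numeric "latent" vectors that a generator turns into shapes. The sketch below is a minimal illustration of that idea only: the `fox` and `dog` vectors and the `morph_path` helper are hypothetical, and the real Get3D generator and its interface are not shown.

```python
from typing import List


def lerp(a: List[float], b: List[float], t: float) -> List[float]:
    """Linearly blend two latent vectors: t=0 returns a, t=1 returns b."""
    if len(a) != len(b):
        raise ValueError("latent vectors must have the same length")
    return [(1.0 - t) * x + t * y for x, y in zip(a, b)]


def morph_path(a: List[float], b: List[float], steps: int) -> List[List[float]]:
    """Return `steps` evenly spaced latent vectors from a to b, inclusive."""
    if steps < 2:
        raise ValueError("need at least two steps (the start and the end)")
    return [lerp(a, b, i / (steps - 1)) for i in range(steps)]


# Hypothetical three-number latents; real generators use hundreds of dimensions.
fox = [0.0, 1.0, -2.0]
dog = [4.0, 1.0, 2.0]
path = morph_path(fox, dog, 5)  # each entry would be fed to the generator
```

Each vector in `path` would be decoded by the generator into one in-between model, which is what makes the fox-to-dog morph appear gradual.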

One can only imagine what the industry could achieve with something like Get3D. The morphing feature could prove incredibly powerful in asset creation – imagine a game world where every creature is generated at runtime from a single 3D base. Currently, game models are carefully constructed and textured by hand, and then optimised for use in-game. If Get3D matures into a scalable solution, developers could spend their time experimenting with every imaginable 3D creature concept and merely feed it into Get3D to get an entire ecosystem of creatures into the game.

Level Design and World Building

Level design and world building have become increasingly relevant – and challenging – as game worlds have grown larger and more complex. One of the more promising companies in this space is Promethean AI. The company aims to address the challenge of creating large and detailed game worlds at scale.

It was founded by Andrew Maximov, a former lead artist who collaborated with hundreds of other colleagues while working on the game worlds of the Uncharted series – a process he characterises as ‘overwhelming’ at times.

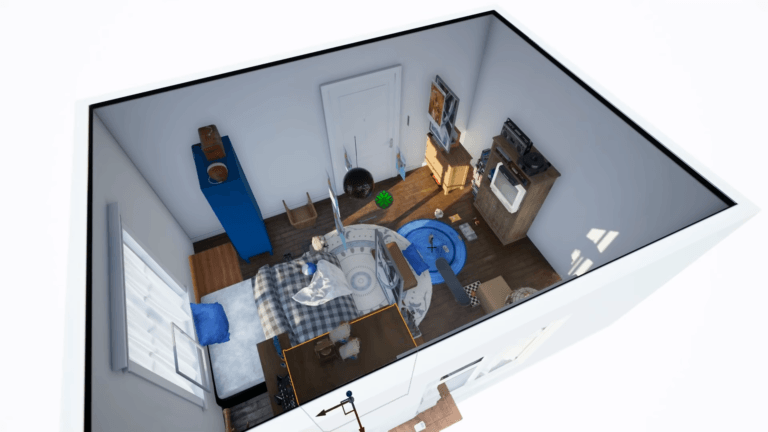

Promethean AI is intended to make world-building a less onerous task, and seems absurdly easy to use. A human artist tells it to build a bedroom, and it does. They then ask it to add a desk, and it does, and so on – the AI keeps plugging pre-created assets into the 3D space as needed and as specified. This patent-pending machine-learning solution spares artists the drudgery of manually placing 3D assets in the environment and removing them again, and allows them to concentrate on the virtual world as a whole.

Promethean AI’s output can be fine-tuned to the artist’s style, and can generate much of the game world, allowing the artist to polish and tweak its output to a high-quality game environment. This process is scalable, allowing developers to make larger and larger games. Promethean AI is also integrated into the Unreal Engine, allowing even enthusiasts to experiment with its capabilities.

Promethean AI can replace and improve upon procedural generation – a core component of games like No Man’s Sky (2016). Procedural techniques enabled indie developer Hello Games to create a vast space exploration game with limited resources. However, No Man’s Sky did not quite live up to the hype at launch – lacking key features promised by the developer – and incurred severe backlash from gamers. If Hello Games had had a tool like Promethean AI at their disposal when they were building a universe with 256 galaxies to explore, they may well have been ready at launch with all the features they had promised.

Dynamic In-Game Music

Numerous companies are at work making AI music generators that can change tracks on the fly, in real-time – which is perfect for games as in-game music is meant to change based on the context and even transition seamlessly from one track to another, serving as audible cues that tell the gamer what to expect in a given setting.

Activision Blizzard has a solid head start in this department. In 2022, the company patented a new AI-driven music generation system, which goes beyond just randomising music or generating musical cues procedurally.

The AI creates music specific to each player using machine learning trained on contextual data such as the player’s actions, their in-game behaviour patterns, their skill level and the in-game situation. Most games do use specific musical cues for different contexts (combat music vs exploration music in a game like The Witcher 3), but Blizzard’s AI can create new music (or at least variations on a theme) for any in-game context based on the data on which it is trained. The AI can even modulate the music tracks’ beat, tempo, volume, and length based on the player’s actions.
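To make the idea of context-driven music concrete, here is a minimal sketch that maps a snapshot of the game state to tempo and volume. It is a hand-written, rule-based stand-in for a learned model, not the patented system itself: the `GameState` fields, the `choose_music` function and every number in it are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class GameState:
    in_combat: bool
    player_health: float  # 0.0 (dead) to 1.0 (full health)
    skill_level: float    # 0.0 (novice) to 1.0 (expert)


@dataclass
class MusicParams:
    tempo_bpm: int
    volume: float  # 0.0 (silent) to 1.0 (full volume)


def choose_music(state: GameState) -> MusicParams:
    """Pick tempo and volume from the current game context."""
    tempo = 90.0   # calm exploration baseline
    volume = 0.5
    if state.in_combat:
        tempo += 40.0
        volume += 0.2
        # The lower the player's health, the more urgent the music.
        tempo += 30.0 * (1.0 - state.player_health)
    # Skilled players get a slightly faster pace in any context.
    tempo += 10.0 * state.skill_level
    return MusicParams(tempo_bpm=round(tempo), volume=min(1.0, volume))


exploring = choose_music(GameState(in_combat=False, player_health=1.0, skill_level=0.0))
fighting = choose_music(GameState(in_combat=True, player_health=0.5, skill_level=1.0))
```

A machine-learning system would replace the fixed rules inside `choose_music` with a model trained on player data, but the inputs and outputs would look broadly similar.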

Realistic AI-Based Animations

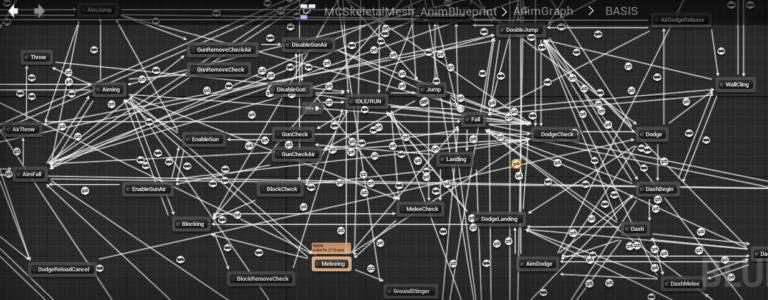

Several companies in the game industry are working hard on streamlining the process of creating seamless animations – many games suffer from stiff transitions, awkward rag-dolls and other immersion-breaking glitches because animation for video games is complex, and constrained by hardware limitations as well.

Move.ai is an application that allows for motion capture (mocap) in any setting using any camera, including phone cams, and uses deep learning to digitise the motion capture into an animation. It is also integrated with the Omniverse, so developers in Nvidia’s ecosystem are spared at least some of the complexity in animating game characters.

Perhaps Electronic Arts’ in-house tool HyperMotion constitutes the most robust use of machine learning for animation creation. EA essentially made 22 professionals play football in mocap suits and fed 8.7 million frames of motion capture into a machine learning algorithm that then learned to create animations in real time, thereby making every interaction on the field realistic. The ML-Flow algorithm’s animations allow players to strike and control the ball with complete ease and precision.

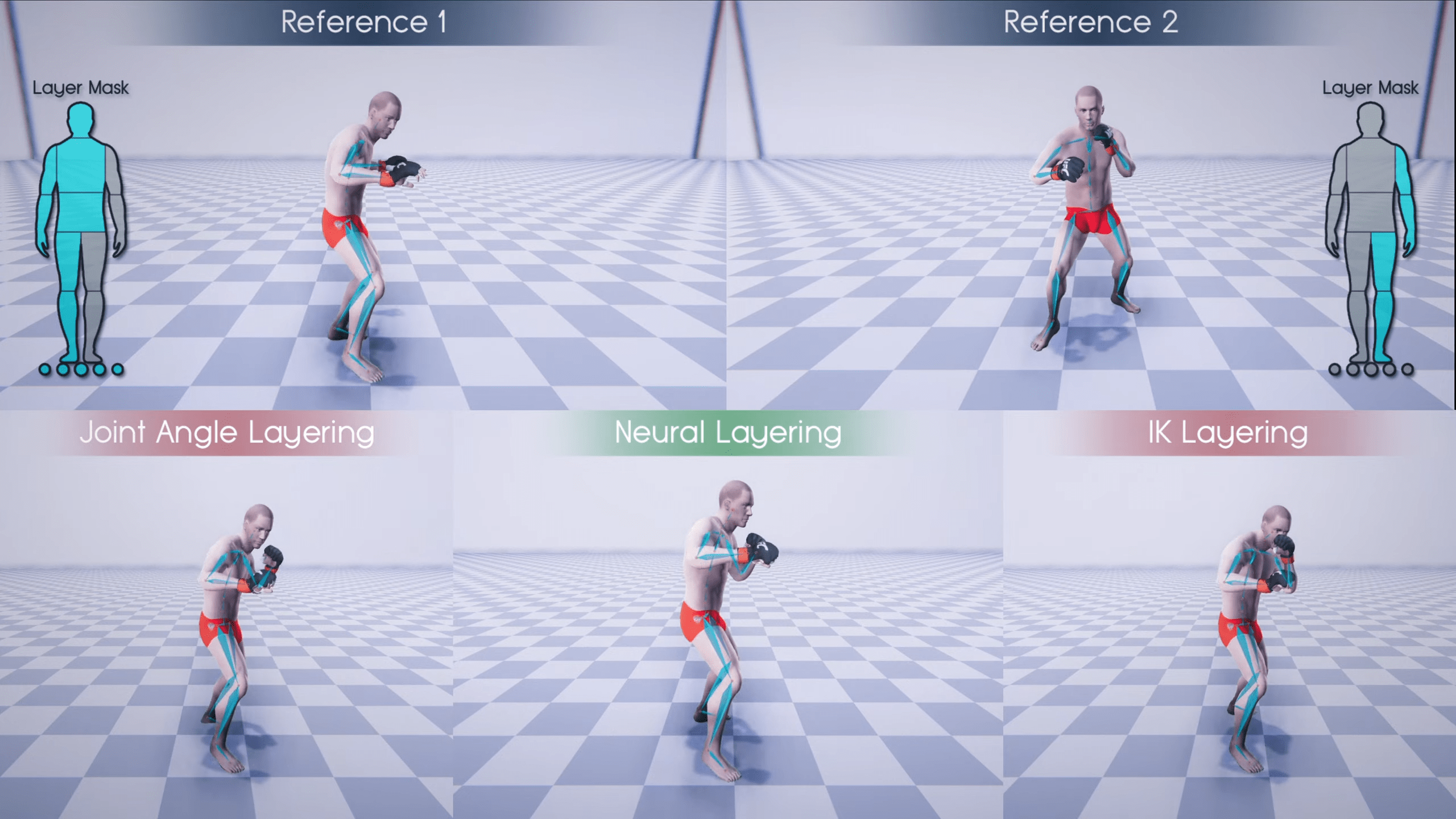

EA’s researchers are also working on a deep-learning solution for fluid movements and transitions for particularly challenging animations, such as martial arts manoeuvres. As is evident, a martial arts game such as Mortal Kombat will absolutely break if its animations are crude, and thus requires animators to carefully edit, mix, blend and layer motion sources to produce seamless animations. The deep learning framework is meant to automate this manual layering using neural networks.

Ubisoft is also trying to solve the problem of creating seamless animations at scale. The company’s Learned Motion Matching System uses AI to improve animations created by using motion capture as a base. Motion matching is behind some of the best animation systems achieved in games, and it essentially allows mocap to be utilised in creating realistic game animations. Mocap can be highly detailed and realistic, but is raw, unstructured data – a digital recreation of movement, like a person walking in a circle, cannot simply be plugged into a game’s animation system without manual editing.

Motion matching is the process by which such data is translated into other movements – like making the character walk back and forth, instead of in circles. This is done by hand-picking motion data and adding various subtle tweaks manually to interpolate mocap data with other animations.

Motion matching systems, however, can hog system memory, and also scale poorly. Ubisoft’s Learned Motion Matching uses machine learning not only to automate motion matching, but also to allow it to scale without straining memory resources.
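In its commonly described form, motion matching searches a database of mocap frames for the one whose features best match what the character should do next. The sketch below shows that lookup as a brute-force nearest-neighbour search. The feature layout (two velocity components and a facing angle), the frame numbers and the `best_matching_frame` helper are all assumptions for illustration, not Ubisoft's implementation.

```python
from typing import List, Sequence, Tuple

# Each entry pairs a mocap frame index with a feature vector describing it.
Frame = Tuple[int, Sequence[float]]


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def best_matching_frame(database: List[Frame], query: Sequence[float]) -> int:
    """Return the index of the stored frame closest to the desired motion."""
    if not database:
        raise ValueError("motion database is empty")
    frame_index, _ = min(database, key=lambda entry: squared_distance(entry[1], query))
    return frame_index


# Hypothetical database: (frame index, (velocity_x, velocity_y, facing angle)).
mocap_db: List[Frame] = [
    (0, (0.0, 0.0, 0.0)),     # standing still
    (120, (1.0, 0.0, 0.0)),   # walking forward
    (240, (0.0, 1.0, 1.57)),  # moving left while turned
    (360, (3.0, 0.0, 0.0)),   # running forward
]

walk_frame = best_matching_frame(mocap_db, (0.9, 0.1, 0.0))
run_frame = best_matching_frame(mocap_db, (2.5, 0.0, 0.0))
```

The whole database has to stay in memory and be scanned for every lookup, which hints at why the approach scales poorly as more mocap is added.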

AI in Playtesting and Quality Assurance

Generative AI is an alluring prospect for developers, especially considering the reduction in cost and time in making quality game assets. But AI can also assist in yet another time-consuming, costly aspect of game development: quality assurance (QA). As games grow bigger and bigger, QA has become increasingly challenging.

Studios have two options for bug testing or playtesting – using bots or human play testers (or both in tandem). Humans are far better at identifying problems, but are also prone to exhaustion or distraction, because they need to play the same levels over and over again, repeatedly checking for exploits, unexpected behaviours, random instability and more, in a draining process designed to weed out every possible bug in the game. Bots, however, will never get exhausted or distracted, no matter how many times they play a level, and are even scalable, but can’t match a human’s capacity to identify bugs.

The QA testers for Fallout 76 (2018) were essentially put through the grinder because of the game’s troubled development cycle and bad launch. AI can spare humans the thankless task of bug testing, playtesting, and patching buggy code.

AI That Learns to Playtest

EA’s researchers have achieved promising results with AI playtesting by using a technique called reinforcement learning (RL), in which the AI is trained through rewards and penalties – rewarded for desired behaviour and punished for undesired outcomes.

RL agents master games by modelling their actions on the rewards and punishments they receive. Losing territory in Go is a punishment, while gaining ground is a reward. In video games, levelling up or killing a boss is a reward, but dying is a punishment. As the RL agent continues to play the game, it rapidly learns to avoid punishment and seek rewards. It soon achieves superhuman proficiency at the game and can start identifying bugs and other in-game problems. However, an AI trained to master a particular game has a very narrow range – it can achieve superhuman results at Go or Dota, but not much else, and cannot playtest another game unless it goes through the exact same process of reinforcement learning all over again.
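The reward-and-punishment loop can be illustrated with tabular Q-learning, one of the simplest RL algorithms. The sketch below is a toy under stated assumptions and far smaller than anything used on a real game: the five-cell corridor, the reward values and the hyperparameters are invented for illustration, with the right-hand end standing in for "killing a boss" and the left-hand end for "dying".

```python
import random

GOAL, PIT = 4, 0    # terminal cells: reward +1 (boss killed) and -1 (death)
ACTIONS = (-1, +1)  # step left or step right along the corridor


def train(episodes=2000, alpha=0.5, gamma=0.9, epsilon=0.3, seed=0):
    """Learn action values by trial and error with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(5) for a in ACTIONS}
    for _ in range(episodes):
        state = 2  # every episode starts in the middle of the corridor
        for _step in range(100):  # cap episode length as a safety net
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)  # explore
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])  # exploit
            nxt = state + action
            reward = 1.0 if nxt == GOAL else -1.0 if nxt == PIT else 0.0
            done = nxt in (GOAL, PIT)
            future = 0.0 if done else max(q[(nxt, a)] for a in ACTIONS)
            # Nudge the estimate towards reward plus discounted future value.
            q[(state, action)] += alpha * (reward + gamma * future - q[(state, action)])
            if done:
                break
            state = nxt
    return q


def greedy_policy(q):
    """Best learned action for each non-terminal cell."""
    return {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in (1, 2, 3)}


policy = greedy_policy(train())
```

After training, the learned policy moves right from every cell, towards the reward and away from the punishment. The table `q` is specific to this corridor, which mirrors the point above: the agent would have to be retrained from scratch for any other game.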

Researchers at EA essentially used reinforcement learning to make AIs better at playtesting rather than playing, by pitting two sub-AIs against each other. One AI creates levels or environments, and the other tries to ‘solve’ these challenges. The solver is rewarded for successfully completing a task, challenge or level. The AI making the environments is rewarded for creating a challenging level that still remains playable.

The AI’s range is thus widened – it is trained to generate more numerous and more complex levels, and also to become more versatile at testing such levels. Essentially, this technique allows a developer to test the game even during the development stage, by letting EA’s AI duo create and test maps based on game assets and code.
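The incentives of the two sub-AIs can be sketched with a toy loop in which a "generator" sets the width of a gap and a "solver" tries to jump it. This is not reinforcement learning and not EA's method. It only illustrates the reward structure described above, and the gap-jumping task, the `co_train` function and all numbers are hypothetical.

```python
def solver_reward(skill: float, gap: float) -> float:
    """The solver is rewarded only when it clears the gap."""
    return 1.0 if skill >= gap else 0.0


def generator_reward(gap: float, solved: bool) -> float:
    """The generator is rewarded for difficulty, but only if the level is playable."""
    return gap if solved else 0.0


def co_train(rounds: int = 50, step: float = 0.5, max_skill: float = 5.0):
    """Alternate between harder levels and a better solver."""
    gap, skill = 1.0, 1.0
    best_playable_gap = 0.0
    for _ in range(rounds):
        solved = solver_reward(skill, gap) > 0.0
        best_playable_gap = max(best_playable_gap, generator_reward(gap, solved))
        if solved:
            gap += step  # generator: try a harder level
        elif skill < max_skill:
            skill = min(max_skill, skill + step)  # solver: improve after failing
        else:
            gap -= step  # generator: level was unplayable, so back off
    return skill, best_playable_gap


final_skill, hardest_playable = co_train()
```

The difficulty keeps rising until the solver reaches its limit, at which point the generator settles on the hardest level that is still playable.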

EA’s research is still in a nascent stage, and it may be a few years before it is implemented. But a sufficiently advanced AI for playtesting can allow human QA testers to focus on issues that cannot be easily identified by AI. Fallout 76’s QA testers may have been spared a lot of toil if they had had such AI tools at their disposal.

Ubisoft’s Bug-Preventive AI

Ubisoft has taken a different but equally novel approach to bug fixing – squashing bugs before they are even coded. Ubisoft fed its Commit Assistant AI with ten years’ worth of code from its software library, training the AI to identify where bugs were historically introduced, how and when they were fixed, and then predict the time when a coder is likely to write buggy code, essentially creating a ‘super-AI’ for its programmers.
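The core idea of learning from past commits where bugs tend to appear can be sketched with a simple frequency model. This is a deliberately crude stand-in, not how Commit Assistant works internally: the file names, the commit history and the smoothing constants below are all invented for illustration.

```python
from collections import defaultdict


def train_risk_model(history):
    """Count, per file, how many past commits introduced a bug.

    history: iterable of (file_path, introduced_bug) pairs.
    """
    counts = defaultdict(lambda: [0, 0])  # file -> [buggy commits, total commits]
    for path, buggy in history:
        counts[path][0] += int(buggy)
        counts[path][1] += 1
    return dict(counts)


def commit_risk(model, files, prior=0.1, weight=2.0):
    """Return the highest smoothed bug rate among the files a commit touches."""
    def rate(path):
        buggy, total = model.get(path, (0, 0))
        # Smoothing pulls files with little history towards the prior rate.
        return (buggy + prior * weight) / (total + weight)
    return max(rate(path) for path in files)


# Hypothetical commit history: (file, did this commit introduce a bug?)
history = [
    ("physics.cpp", True),
    ("physics.cpp", True),
    ("physics.cpp", False),
    ("ui.cpp", False),
    ("ui.cpp", False),
]
model = train_risk_model(history)
risky = commit_risk(model, ["physics.cpp", "ui.cpp"])
safe = commit_risk(model, ["ui.cpp"])
```

A commit touching the historically buggy file scores higher than one touching only the stable file, so a tool built on this idea could warn the programmer before the change is merged.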

Ubisoft claims that bug-fixing during the development phase can swallow up to 70% of costs. The Commit Assistant has not been integrated into the coding pipeline but is being shared with select teams, as there are concerns that programmers may baulk at an AI that is telling them they are doing their job wrong. Ubisoft wants the AI to speed up the coding process – it wants its coders to treat the AI as a useful tool rather than a hindrance, and intends to be completely transparent about how the AI was trained.

A limitation that can plague any AI-based bug fixing is the problem of bug reporting. AIs can be trained on data sets to master games, and even become proficient at identifying bugs. But how would an AI report bugs, considering that one of the crucial aspects of bug fixing is having recourse to a well-written bug report?

OpenAI’s ChatGPT can converse with humans, answer their questions and is also proficient at fixing code. But it’s even better at bug-fixing when it is engaged in natural language dialogue with the human coder – Ubisoft’s Commit Assistant could well be trained like ChatGPT to communicate with coders to build trust, and playtesting AIs may need natural language dialogue capabilities to tell humans about game bugs, or even fix them while conversing with programmers, as ChatGPT does.

What AI Implies for the Game Industry’s Future

We have discussed various AI tools that can assist studios in the game development pipeline. In a sense, many of these solutions are meant to reduce manual work and allow developers to focus on the things that really count.

However, AI can also be used in novel ways that have nothing to do with the asset pipeline or game development, and can also empower very small teams to create ambitious games, democratising the game industry. But this revolution can end before it begins as legal issues loom over AI generators – and we will deal with these issues in brief, before discussing how AI can change the game industry’s landscape.

The Legal Wrangle over Generative AI

Multiple lawsuits have been filed against AI-powered image generators. One of the plaintiffs is none other than Getty Images, a behemoth that owns one of the world’s largest repositories of images, vector graphics, videos and other media, and predominantly provides stock photos for corporations and the news media.

Getty’s suit contends that Stability AI, the creator of Stable Diffusion, copied over 12 million images from its stock library without ‘permission or compensation’, as part of an effort to ‘build a competing business’. As we have said before, generative AI tools are trained on vast datasets. But if such data is copyrighted or trademarked, then it arguably needs to be paid for – whether the image is supposed to fill out a newspaper column or a corporate brochure, or an AI’s training dataset.

This lawsuit, and another filed by three artists, threaten the continued existence of Stable Diffusion or Midjourney unless the courts rule that providing an AI with copyrighted images solely for training purposes constitutes fair use, especially as AI generators arguably transform the data they are fed with to create original content.

One can argue that such lawsuits have already done enough damage. Litigation takes years and game studios, filmmakers and other media houses that could use such AI tools will be wary of integrating them into the pipeline until the legal tangle is resolved. However, AIs such as Ubisoft’s Commit Assistant and EA’s HyperMotion are arguably immune from litigation, as their training data is generated in-house – legal issues over copyright can be circumvented by sourcing data using the right means.

AI-Powered Innovation in Gaming

As early as 2018, Activision used machine learning to help players improve their gaming skills. Activision’s tool was integrated into Alexa, and helped train gamers to get better at playing Call of Duty: Black Ops 4 (2018). The tool is no longer available, but it was still an interesting experiment in using machine learning and a human-like interlocutor, such as Alexa, to guide gamers through a play session.

While Activision’s experiment was short-lived, Ludo’s solution for de-risking the gaming industry may well become integral to the game maker’s toolkit. As we have mentioned above, Ludo helps developers ideate and develop 2D assets with its image generator. It also has a market analysis tool that can help studios get a good sense of how their game might perform.

The developer can feed the AI-based tool with a proposed game concept, and Ludo will scour its vast database to determine if the idea has been thought of before. This is critical for mobile game developers, who work in a field where games struggle to rise to the top. Ludo can also identify trending genres and top charts, to help developers model their game on ideas and titles that are performing well. Since its launch in 2021, Ludo has attracted more than 8,000 developers.

Conclusion

In recent years, academic papers about generative AI have been published at an exponential rate and many companies are now working on AI-based solutions not only for gaming, but for other industries too. This spike in research and development has been called a ‘Cambrian explosion’, likening the emergence of generative AI toolsets to the proliferation of animal species during the Cambrian Period 539 million years ago. The game industry stands to benefit immensely from this surge in practical AI solutions.

However, using AI to enhance game development is not without its challenges – generative AI is a nascent field and legal issues loom over it already. Even Ubisoft is treading lightly with its bug-preventive AI so that programmers can gradually accept the tool as a benefit rather than a hindrance.

Despite these challenges, AI has the potential to democratise the gaming industry and act as a force multiplier for small developer teams, empowering them to make ambitious games by using AI to streamline the game development process, circumvent budget constraints, and even innovate with AI tools to create truly unique gaming experiences.

Gameopedia can provide tailored solutions to meet your particular data needs. Contact us to receive useful information and derive actionable insights regarding generative AI techniques, and their impact on the game industry in general and game development in particular.